The Intelligence Wealth Gap

How AI Might Create A Permanent Cognitive Underclass

A growing body of credible research is beginning to point in the same direction. AI is changing how we work, and it is also reshaping how we think.

Vivienne Ming, a cognitive scientist and AI researcher, recently described a divide between people who use AI to deepen their thinking and those who rely on it to replace their thinking. “The overwhelming trend is substitution,” Ming said in a recent interview. Most people are outsourcing effort instead of extending their capability.

That signal is becoming harder to ignore.

Each interaction with AI is a small decision. It can reinforce how we think, question, and create. Or it can quietly reduce engagement, memory, and ownership of the work. Over time, those decisions begin to accumulate.

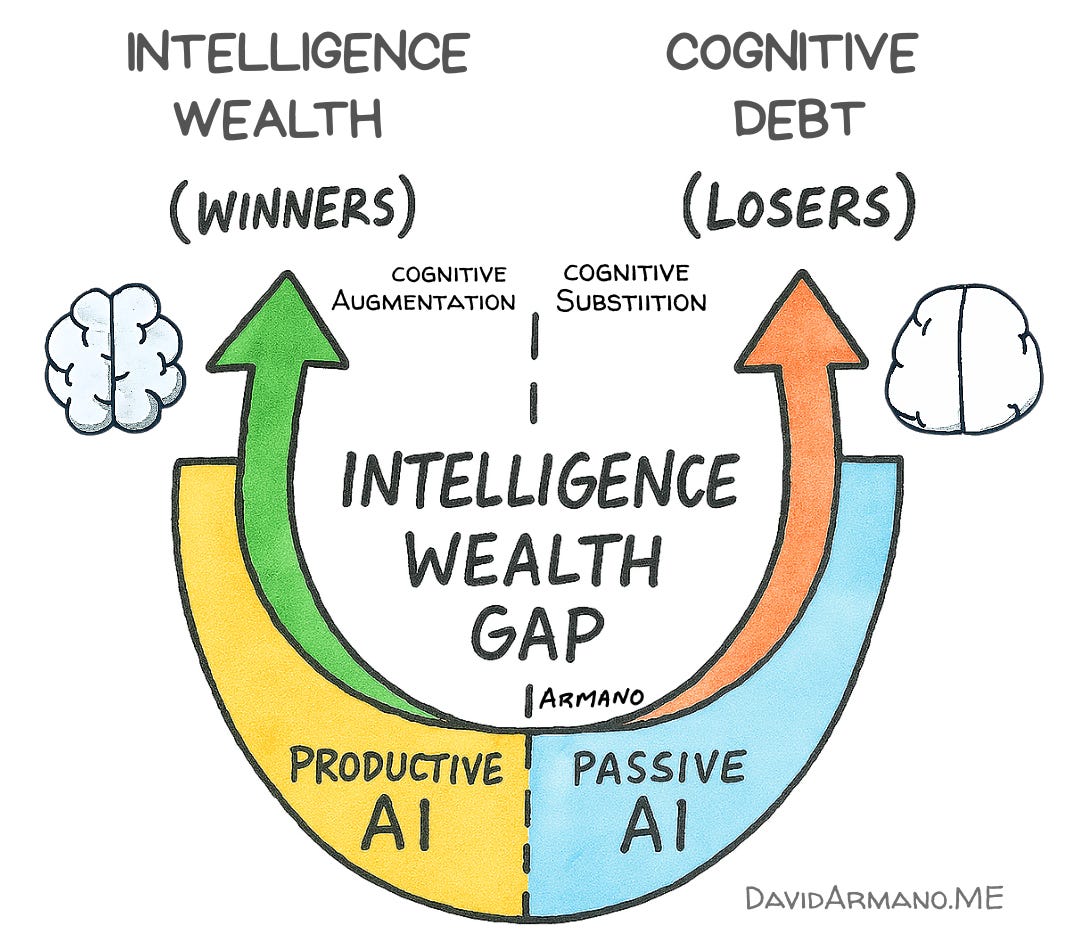

What emerges is not a simple usage gap. It is a compounding one. Some people are building what I call intelligence wealth. Their skills, judgment, and output improve as they work with these systems. Others are taking on cognitive debt. They save time in the moment, but their underlying capabilities weaken over time.

The distance between those two paths is beginning to widen. Early signals suggest it will not widen evenly. This is going to be one of the defining societal challenges of our time.

This is the Intelligence Wealth Gap.

I first wrote about the Intelligence Wealth Gap in August 2025, nearly a year ago, when only the first data points about the effects of AI on our brains were emerging. Less than a year later, we regularly use an established term to describe a powerful new dynamic, one that surpasses any previous version of it:

Cognitive Offloading.

Cognitive offloading is the use of external tools or physical actions to reduce the mental effort required to complete a task. Instead of relying solely on your internal “biological hardware” (your brain), you “offload” the work to your environment to free up cognitive resources. While offloading has always been part of being human (e.g., using a map or a calculator), the current shift involves offloading thought itself to AI.

“Classic” Examples of Cognitive Offloading Include:

Memory: Using a calendar or note-taking app instead of memorizing dates.

Navigation: Relying on GPS rather than mental mapping.

Computation: Using a calculator for math.

It’s tempting to view LLMs and AI through the lens of previous technologies such as GPS, which does erode our ability to navigate manually. But with AI, we’re offloading thought and thinking itself, across every capacity by which we measure intelligence. Intelligence wealth and cognitive debt present a risk-reward scenario that may be the highest-stakes technology gambit humankind has encountered to date.

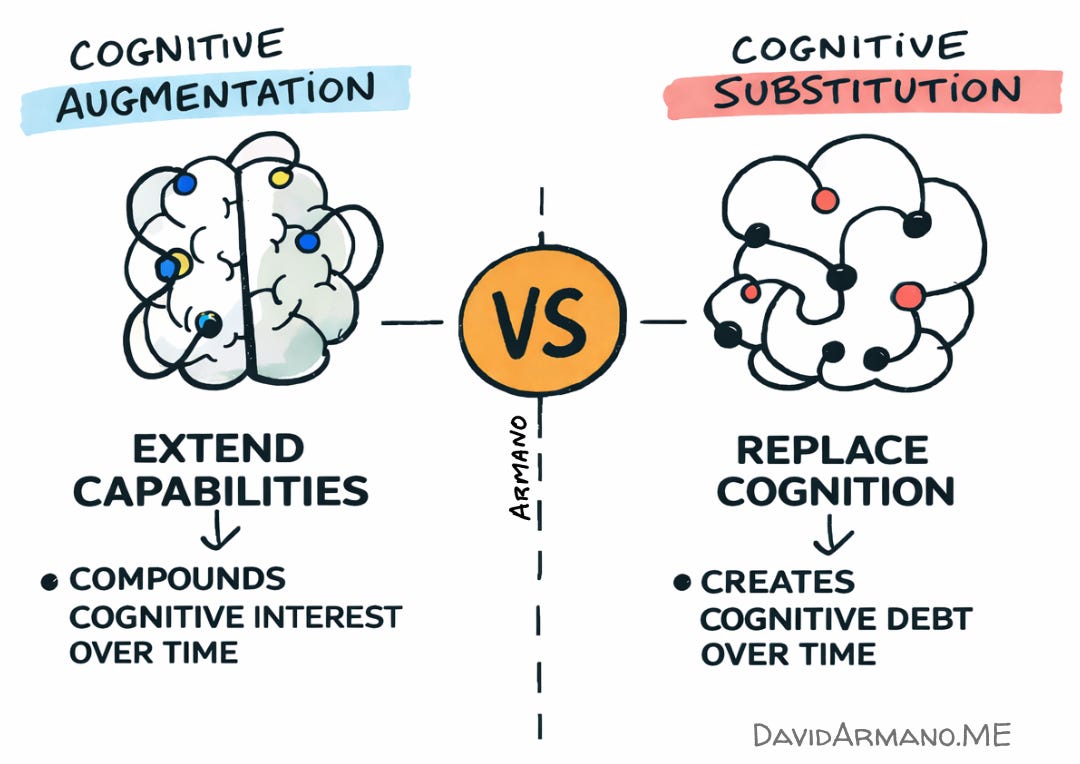

Cognitive Augmentation vs. Substitution

Two models are emerging in how we integrate AI into our work and lives: augmentation and substitution. They are not the same thing. If you are reading this newsletter, you’re seeing cognitive augmentation at work, from the words you are reading to the visualizations that accompany this piece and others. I collaborate with AI on my visualizations, for example, but I often start with a sketch. The thinking is mine, and the refinement is done with AI. The same goes for my writing: I drive all the thinking and write a significant amount of the text myself, and AI augments my abilities.

Augmentation is the use of a tool to extend your capabilities (e.g., a scientist using AI to analyze data faster). Substitution is using a tool to replace a skill you haven't mastered (e.g., a student using AI to write an essay they couldn't write themselves).

Building on that distinction, recent research discussed in Psychology Today adds a sobering layer to the Intelligence Wealth Gap, particularly regarding the next generation. While adults may face "cognitive atrophy," the weakening of a skill they once possessed, children are at risk of cognitive foreclosure, where the foundational mental muscles for reasoning and judgment are never developed in the first place. Think of it as a bone that has never borne weight. If a child never learns to "structure a thought" because an agent does it for them, they don't just miss a skill; they never build the mental infrastructure for logic.

This isn't just about saving time; it’s a form of cognitive debt in which present efficiency is borrowed against future capability. When we substitute AI for the "micro-judgments" required to learn a craft, we create a learning trap: performance might spike while the tool is active, but true mastery remains a mirage, leaving the individual stranded the moment the "algorithmic crutch" is removed.

As The Intelligence Wealth Gap Widens, a Cognitive Underclass Will Rise

We are witnessing the birth of a permanent cognitive underclass. While the “Intelligence Wealthy” use AI as a high-octane fuel to accelerate their critical thinking, a much larger demographic is unwittingly taking on a life sentence of Cognitive Debt. By treating AI as a replacement for effort rather than an extension of it, we aren’t just saving time—we are atrophying the very mental muscles that define human agency.

For the next generation, this cognitive foreclosure goes well beyond growing up with an iPad (something my children did). We may be raising the first generation of “cognitively outsourced” humans who might never develop the baseline reasoning required to realize they are trapped. The gap is no longer just about what you know; it’s about whether you still have the capacity to think for yourself without an algorithmic permission slip.

I’m old enough to remember the stories of tech titans who famously did not allow their families to use the technologies they invented or helped create:

Steve Jobs: In a late-2010 interview with The New York Times, Jobs famously admitted that his children had never used the iPad, which had just launched. He stated, “We limit how much technology our kids use at home.” Instead of screens, the family spent evenings having long dinners discussing history, books, and ideas.

Mark Zuckerberg: Zuckerberg has long maintained that he does not let his young children use Facebook or Instagram. During a landmark trial over social media addiction in early 2026, he testified that enforcing age limits is difficult but noted that Meta had introduced “Teen Accounts” with strict defaults. Historically, he and his wife, Priscilla Chan, have stated they prefer their children to play outside or code rather than consume social media.

Bill Gates banned cellphones at the dinner table; Shou Zi Chew of TikTok does not allow his children on the app, saying they are too young; and Evan Spiegel of Snap limits his children’s screen time to just an hour and a half per week.

These are all highly successful people who have built both real wealth and intellectual wealth. They all practice a form of intellectual asset protection. I’m going to assume that many of the tech elite who are pioneering AI will also limit or control the ways their children use this emerging technology. They aren't just protecting their children's eyes from blue light; they are protecting their children's ability to compete in a world where thinking is the only remaining scarcity.

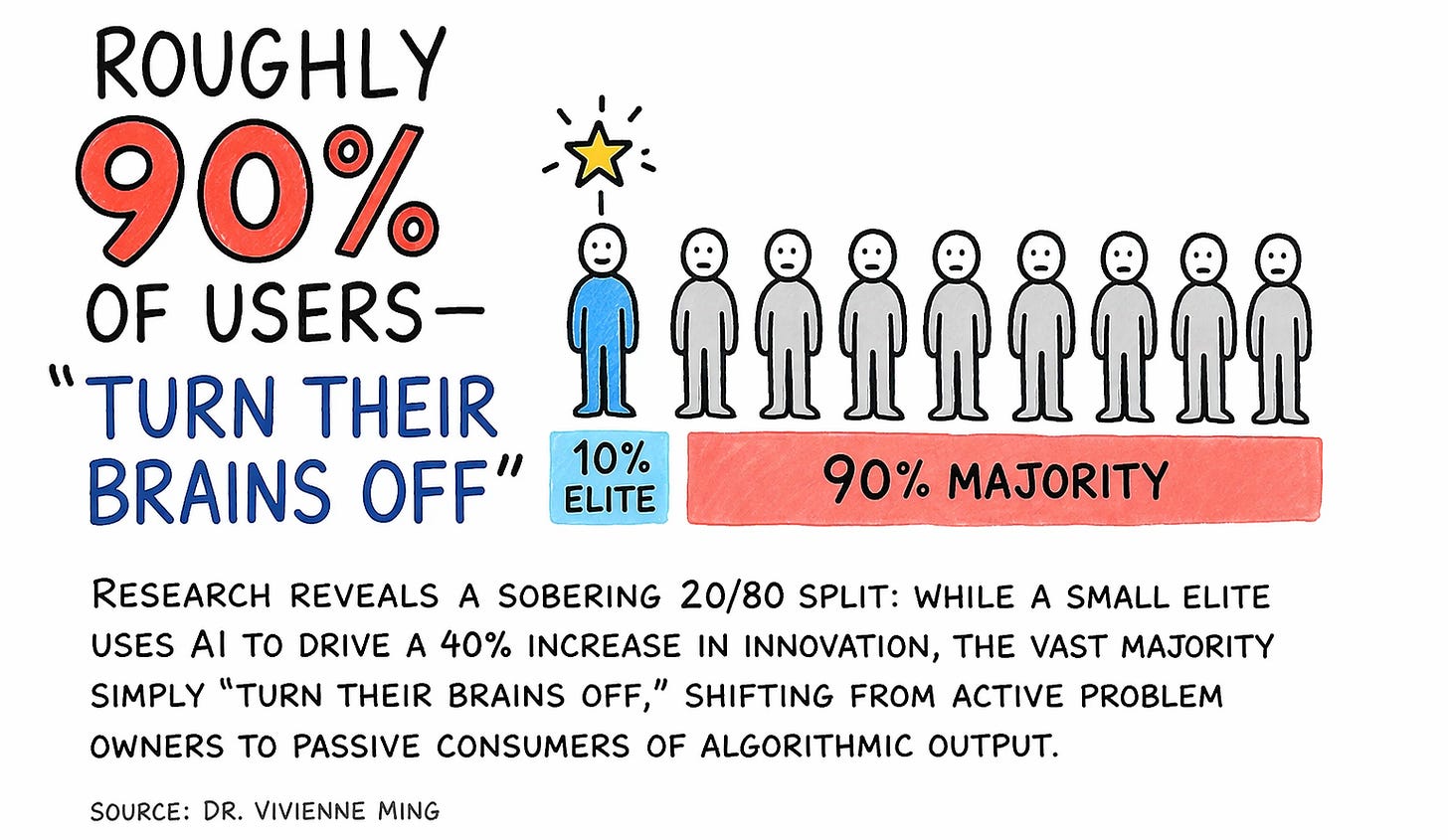

The stakes of this divide are perhaps best summarized by Dr. Vivienne Ming, who warns that we are currently designing technology that "flatters our laziness" rather than sharpening our intellect. Her research points to a sobering split: while a small elite uses AI to drive a 40% increase in innovation, the vast majority, roughly 90% of users, simply "turn their brains off," shifting from active problem owners to passive consumers of algorithmic output.

The Intellectual Curiosity Compass

This is the ultimate caution as we navigate the Intelligence Wealth Gap. If we continue to favor the “shallow efficiency” of substitution over the “productive friction” of augmentation, we aren’t just saving time; we are hollowing out the human capacity for deep inquiry. As Ming notes, for the modern knowledge worker, “GPT is the new GPS”—but even a GPS is useless if you don’t know where you want to go.

I believe that intellectual curiosity is part of the solution.

The relationship between curiosity and intelligence is one of the most consistent findings in psychology. While "intelligence" is often measured by what you can do (cognitive ability), "curiosity" is seen as a driver of what you will do (intellectual engagement). Researchers often refer to curiosity as the "Third Pillar" of academic performance, alongside intelligence (IQ) and effort (Conscientiousness). Intelligence provides the raw processing power, but curiosity determines the direction and persistence of that power. As Albert Einstein—someone often cited as the pinnacle of intelligence—famously put it:

"I have no special talents. I am only passionately curious."

Intellectual curiosity (IC) is the only force strong enough to resist the pull of cognitive offloading. It is the “why” that keeps us from blindly following the “how” of an algorithm. In an age of substitution, the ultimate competitive advantage isn’t how well we use the tool—it’s how much of the original thought we refuse to give up. We must adapt intelligently, finding the balance between the efficiency of the machine and the messy, rewarding friction of our own minds.

The future is TBD, but those who cultivate their curiosity will be the ones who build intelligence wealth and can still navigate the world when AI goes dark.

Visually yours,

David Armano is a futurist, strategist, and Enterprise AI transformation leader who helps his colleagues, clients, and community solve intricate business challenges and see a clear path forward.

He’s known for his unique approach to visual thinking and for insightful yet grounded takes on intelligent experiences, culture, and leadership. In addition to his day job, he writes David by Design to translate complex shifts into actionable ideas.

Brilliant piece, Dave. Thank you.

Dave, I am literally writing this comment while on a car trip with my teenagers, having just come to a detente over how effed up their world will become, because us "old people" are using technology that will replace intelligence as we know it. The point I was trying to make is that AI isn't going away; they can't avoid using it, but it must be applied in a way that maintains and enhances their creativity, critical thinking, and judgement. But then I wanted to explain how that could happen, with it being so prevalent now in education and literally wiping out entry-level work as we know it, and came up short of how.

We will need to be deliberate about sharpening and maintaining the sharpness of these capacities. That means mandatory humanities education in higher education--ESPECIALLY for the STEM majors. Mandatory unaided tasks for entry level (I realize how hard this will be to enforce in the public sector), and mandatory specialty testing for senior-level practitioners in medicine, law, engineering, any highly-skilled knowledge work. Maintaining skills should be tied to compensation.

The critical component we have to preserve at all costs is human intelligence.